-

Converting a SQL Server .bacpac to a .dacpac

Microsoft SQL Server has two related portable file formats - the DACPAC and the BACPAC. Quoting Data-tier Applications:

A DAC is a self-contained unit of SQL Server database deployment that enables data-tier developers and database administrators to package SQL Server objects into a portable artifact called a DAC package, also known as a DACPAC. A BACPAC is a related artifact that encapsulates the database schema as well as the data stored in the database.

When they say related, they’re not kidding! Both of these files formats are based on the Open Packaging Conventions (a fancy way of saying it’s a .zip file with some other bits), and cracking them open you discover that a bacpac file is basically a dacpac with a few extra files and a couple of different settings. Knowing this, it should be possible to manually convert a bacpac to a dacpac.

First, unzip the .bacpac file (using 7-zip, or rename to .zip and use Windows File Explorer’s Extract Archive).

Now do the following actions (you could do these programmatically if this is something you need to do repeatedly):

- Edit model.xml

- Change DataSchemaModel@SchemaVersion to 2.4

- Edit Origin.xml

- Change ContainsExportedData to false

- Change ModelSchemaVersion to 2.4

- Remove ExportStatistics

- Recalculate the SHA256 checksum for model.xml and update the value stored in

Checksums/Checksum@Uri=’/model.xml’

- Remove directories

\_relsand Data

Now re-zip up the remaining files and change the file suffix back to .dacpac

To verify that the .dacpac is valid, try using SSMS with the Upgrade Data-tier Application wizard. Run it against any database and if you can proceed to without error to the “Review Upgrade Plan” step, you should be good to go.

- Edit model.xml

-

Create a temporary file with a custom extension in PowerShell

Just a quick thing I wanted to record for posterity. The trick is using the

-PassThruparameter with theRename-Itemcmdlet so that this ends up a one-liner thanks to PowerShell’s pipeline:$tempNuspec = Get-ChildItem ([IO.Path]::GetTempFileName()) | Rename-Item -NewName { [IO.Path]::ChangeExtension($_, ".nuspec") } -PassThru -

Microsoft LifeCam Studio stops working with Windows 10

I have a Microsoft LiveCam Studio webcam that I bought a few years ago for the Adelaide .NET User Group for when we have remote presenters. It’s been pretty good (although not long after I bought it, Scott Hanselman tweeted that actually the Logitech 930e was worth considering with possibly a wider shot).

I went to use it the other day, and it just plain refused to work. My laptop has a builtin webcam and that was showing up, but using any app (eg. Microsoft Teams or the Windows Camera app) just wasn’t showing the LifeCam. It was strange as it did show up as an audio device, but not video.

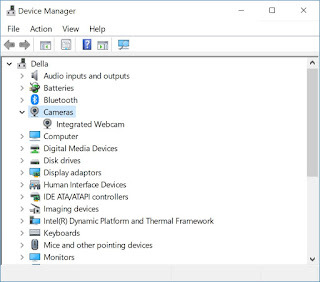

I brought up Device Manager, and looked in the Cameras node, but it wasn’t there. I tried unplugging it and re-plugging back in (and rebooting Windows) to no avail.

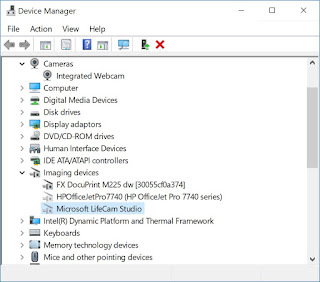

I then tried the webcam with a different PC, and it worked, so at least I knew the device wasn’t faulty. Firing up Device Manager on the second PC revealed something interesting though. The LifeCam wasn’t under Cameras, it was listed under Imaging devices. Who would have guessed!

Switching back to my laptop, in Device Manager, I went to the View menu and selected Show hidden devices. Looking under the Imaging devices revealed something unexpected. There were two device drivers listed for the LifeCam! I right-clicked on both devices and selected Uninstall device.

I then plugged the webcam back into the laptop, and now Windows registered that a new device was attached and indicated it was installing the device drivers. After a short wait, it was now working correctly!

Mystery solved 😁