-

Building with GitHub Actions

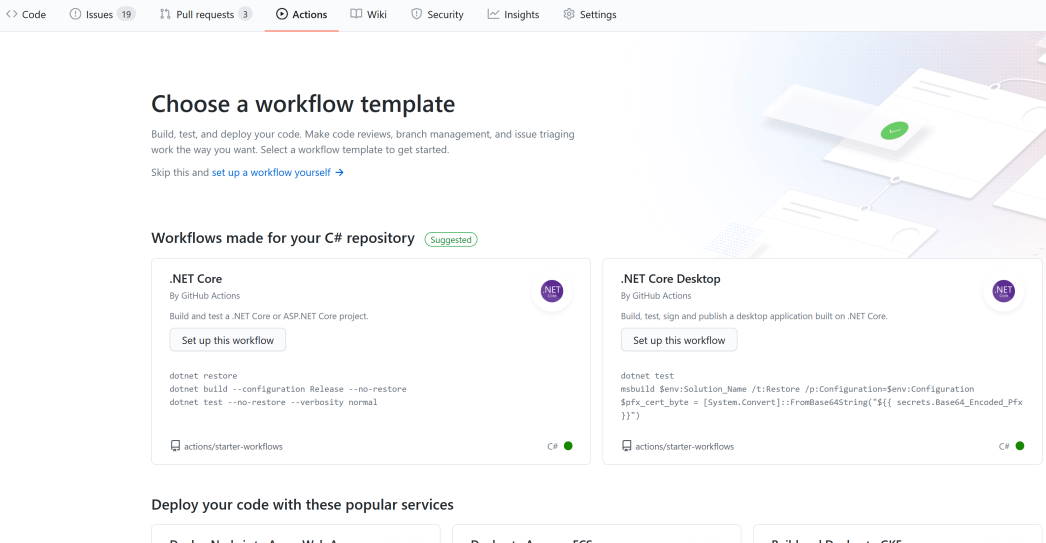

Adding a GitHub Action to a repository is pretty easy. You can use the web UI: click on the Actions menu and select one of the built-in workflows. If you’ve used Azure Pipelines before, then things will feel kind of similar. Both use YAML as the format for describing build workflows, though there are subtle differences.

Using the web UI is a good way to get started, plus you can use the search field to look for suitable actions. A workflow is just a file though, so you can edit it and commit it just like any other file.

Each workflow is stored in a separate file. These files reside in the special `.github/workflows` directory.

Here’s a simple workflow:

```yaml
name: CI

# Run the workflow on every push to master
on:
  push:
    branches: [ master ]

jobs:
  build:
    runs-on: windows-latest

    steps:
      # fetch-depth: 0 fetches the full git history
      - uses: actions/checkout@v2
        with:
          fetch-depth: 0

      - name: nuget restore
        run: nuget restore -Verbosity quiet

      # Make sure msbuild is available on the PATH
      - name: setup-msbuild
        uses: microsoft/setup-msbuild@v1

      - name: Build
        id: build
        run: |
          msbuild /p:configuration=Release /p:DeployExtension=false /p:ZipPackageCompressionLevel=normal /v:m
```

The `on` is the trigger which will cause this workflow to run. Common triggers include `push` or `pull_request`, and you can filter triggers to only fire on specific branches. The full list of available triggers is in Webhook events.

Under `jobs`, you can list one or more jobs. Each job runs on a runner (build agent). Runners can be provided by GitHub or hosted by you. The GitHub-hosted runners actually have the same installed software as the Azure Pipelines hosted agents.

A job (e.g. `build` in the example above) also lists actions under the `steps` section. There are a number of actions provided by GitHub and lots of third-party actions too. It’s pretty easy to create a new action. In a future post I’ll give an overview of the custom action I created.

The above workflow does a git checkout (with full history), then restores NuGet packages, ensures `msbuild` is available and then runs `msbuild`.

Once your workflow file is committed to the repository, you can view the execution history, or edit the workflow through the Actions tab.
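
As a small illustration of the trigger filtering mentioned earlier, here’s a sketch of an `on` section that fires for both pushes and pull requests targeting one branch. The `master` branch name is simply carried over from the example workflow, so treat it as an assumption and adjust it to suit your repository.

```yaml
# Sketch only: replaces the `on` section of a workflow file.
# Assumes the default branch is called master, as in the example workflow.
on:
  push:
    branches: [ master ]
  pull_request:
    branches: [ master ]
```

With a filter like this, pushes to other branches shouldn’t start the workflow, while pull requests that target `master` will.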

-

CI/CD for Visual Studio extensions with GitHub Actions

An inclement weekend has given me a chance to spend some time improving the build and publish story for one of my Visual Studio extensions - Show Missing, and along the way I’ve learned a lot about using GitHub Actions to implement a nice CI/CD story.

I had previously set up AppVeyor and Azure Pipelines configurations to build this project. I thought this would be a good opportunity to consolidate automated build and deployment using GitHub Actions and add some nice enhancements to the process along the way.

The project source is located in this repository on GitHub. I’m going to blog about each of the changes I made. At this stage I’m planning to cover:

- Building with GitHub Actions

- Optimise build with caching

- Adding Dependabot

- Create releases

- Attach binary to release

- Create a new GitHub Action for deleting release assets

- Publish release to the Visual Studio Marketplace

I’ll add links to subsequent posts as they are published.

-

Work from home update

I started working from home back on March 16th, so it’s been around 14 weeks since I’ve worked in our office in Adelaide CBD.

My normal weekday routine is getting up (usually earlier than I’d prefer), breakfast, shower and some stretches and exercises. The exercises were given to me by the physiotherapist after I injured my back last year playing basketball. While I haven’t returned to playing competitively, I figure it’s worth it for my own health to keep them up. A friend on Facebook was challenging folks to try and do 30 push ups a day for 30 days. I usually ignore these kinds of things (and don’t repost them), but in this case I figured it was worth adding to my list. I’ve slowly been building up and hope to keep doing it for the long term.

Around the time of the school run (or a bit before) I head out for a morning walk. Most days I’ve been doing a 5km loop around the neighbourhood (weather permitting), which takes around 60 minutes. It takes the place of my walk to the bus and commute into town that I would previously have done. A great chance to get some fresh air and get the blood circulating, listen to podcasts (Daily Audio Bible followed by various technical podcasts), and prepare for the day ahead.

The mornings can be chilly when I’m out walking, so my pullover with sleeves that have a hole to put your thumb through is handy. I originally thought the hole was a defect, but later I realised it was intended all along and is a useful feature!

Work follows mostly business hours, though a few weeks ago we did have a number of evening conference calls and training sessions. That is an ongoing challenge working for a global company - finding a time that’s convenient for most people to attend.

I usually take my own lunch to work, but it is extra nice to be able to put a bit of extra effort into a homemade sandwich! Yes, that fruit is home grown too.

Being able to focus with fewer interruptions is a big bonus. I can also choose to put some background music on. Double J Radio is my station of choice.

I wrap up the work day around 5-6pm and then see what’s happening with the rest of the family. It is nice to be home when they all get home from school. Sometimes there are hot chips as an after-school treat, so it’s good to be around to enjoy that!

There have been some discussions about returning to the office in the future. South Australia has had a total of 440 cases of COVID (with just 4 deaths) and no new cases in the last 4 weeks, which is very encouraging.

I’m in no particular rush though. I’ve generally enjoyed my time working at home. I don’t miss the 2 hours I was spending commuting each day. I think I’d like to keep doing it going forward (maybe going into the office 1 day a week).